@khanhj

I wanted to touch base with you again about this, as it's been 8 days and neither vNode role has moved from “waiting”. In the 6 weeks I have been running them, that has never happened; I have always had at least 1 committee selection between the two within that period, so I wanted to verify that everything looks correct. Below are the outputs from docker ps and netstat for each vNode, and the port forwarding scheme on my router.

vNode 1, default ports (docker ps output)

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

b3a66831c3fd incognitochain/incognito-mainnet:20200226_1 "/bin/sh run_incogni…" 9 days ago Up 3 days 0.0.0.0:9334->9334/tcp, 0.0.0.0:9433->9433/tcp inc_mainnet

328edf14671f parity/parity:stable "/bin/parity --light…" 9 days ago Up 3 days 5001/tcp, 8080/tcp, 8082-8083/tcp, 8180/tcp, 0.0.0.0:8545->8545/tcp, 8546/tcp, 0.0.0.0:30303->30303/tcp, 0.0.0.0:30303->30303/udp eth_mainnet

sudo netstat -nltp | grep docker-proxy

tcp6 0 0 :::9334 :::* LISTEN 2097/docker-proxy

tcp6 0 0 :::9433 :::* LISTEN 2085/docker-proxy

tcp6 0 0 :::30303 :::* LISTEN 2110/docker-proxy

tcp6 0 0 :::8545 :::* LISTEN 2141/docker-proxy

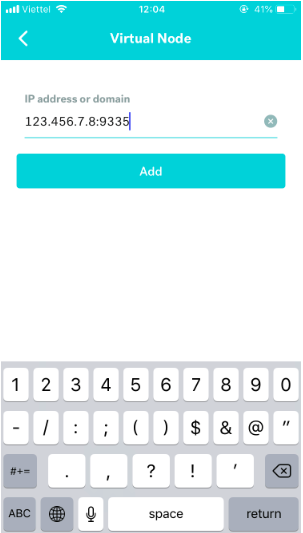

vNode 2, custom ports (docker ps output)

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

730d5f255ec7 incognitochain/incognito-mainnet:20200226_1 "/bin/sh run_incogni…" 9 days ago Up 8 days 0.0.0.0:9335->9335/tcp, 0.0.0.0:9434->9434/tcp inc_mainnet

e1199e4ca95c parity/parity:stable "/bin/parity --light…" 9 days ago Up 8 days 5001/tcp, 8080/tcp, 8082-8083/tcp, 8180/tcp, 0.0.0.0:8546->8546/tcp, 8545/tcp, 0.0.0.0:30303->30303/tcp, 0.0.0.0:30303->30303/udp eth_mainnet

sudo netstat -nltp | grep docker-proxy

tcp6 0 0 :::9335 :::* LISTEN 1845/docker-proxy

tcp6 0 0 :::9434 :::* LISTEN 1833/docker-proxy

tcp6 0 0 :::30303 :::* LISTEN 1858/docker-proxy

tcp6 0 0 :::8546 :::* LISTEN 1884/docker-proxy

vNode 1: 192.168.1.128

vNode 2: 192.168.1.129
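For anyone who wants to double-check the same thing, here is a rough sketch of a TCP reachability test for the published incognito ports on both machines. The hosts and ports are copied from the docker ps output above; the helper names (`port_is_open`, `check_all`) are just illustrative, and running it from another machine on the LAN only confirms the containers accept connections locally. It says nothing about whether the router forwards those ports from the outside.

```python
import socket

# Hosts and published ports copied from the docker ps output in this post.
VNODE_PORTS = {
    "192.168.1.128": [9334, 9433],  # vNode 1, default ports
    "192.168.1.129": [9335, 9434],  # vNode 2, custom ports
}


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_all(targets: dict) -> dict:
    """Map every (host, port) pair in targets to its reachability result."""
    return {
        (host, port): port_is_open(host, port)
        for host, ports in targets.items()
        for port in ports
    }


if __name__ == "__main__":
    for (host, port), ok in check_all(VNODE_PORTS).items():
        print(f"{host}:{port} {'open' if ok else 'closed or unreachable'}")
```

To test the forwarding itself, the same check would have to be run from outside the network against the router's public IP, which I have left out here.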

port forwarding configuration:

This means that your node still is synching. Please see
